The Challenge of Scaling AI for Ads

Meta has long used state-of-the-art artificial intelligence to power its recommendation systems, particularly for advertisements. Its push to improve user experiences and advertiser outcomes has led to a large scaling effort: bringing large language model (LLM)-level complexity into the real-time ad ranking engine. This ambition, however, collides with a fundamental obstacle known as the inference trilemma.

The Inference Trilemma: Balancing Scale, Speed, and Cost

As Meta expands its ad models to LLM scale, it faces a three-way conflict. First, greater model complexity demands more computational power and memory. Second, the service must maintain sub-second latency for billions of users worldwide. Third, all of this must happen cost-effectively. Traditional "one-size-fits-all" inference, which runs the same model for every request, cannot reconcile these forces. Meta's answer is the Adaptive Ranking Model, which matches the compute spent on each request to that request's complexity.

Meta Adaptive Ranking Model: Smarter, Not Just Bigger

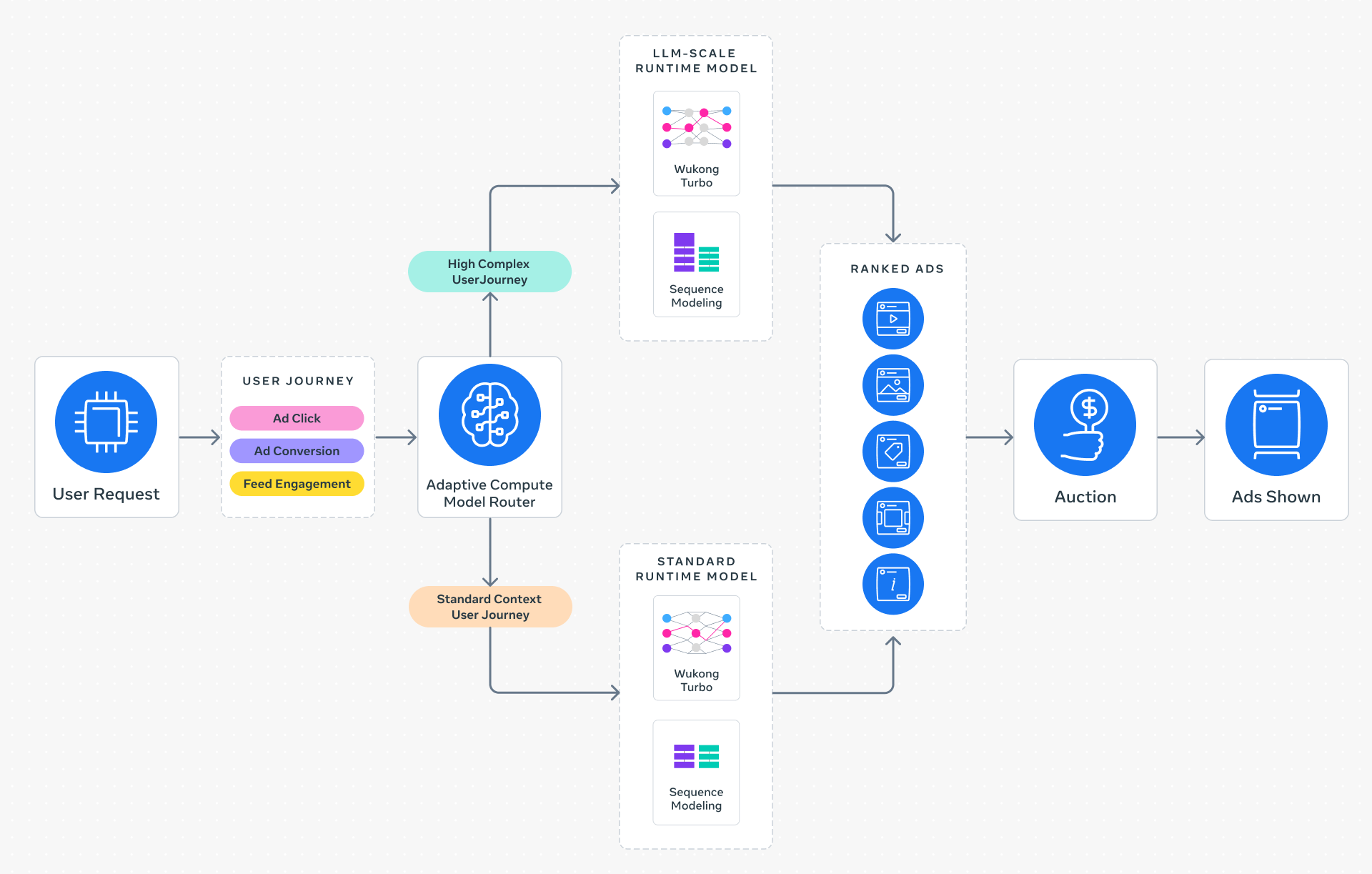

Instead of applying a single, monolithic model to every ad request, the Adaptive Ranking Model introduces intelligent request routing. It dynamically assesses each user's context (such as recent activity, session history, and inferred intent) and selects the most appropriate model variant. Simple requests can be handled by lightweight models, while complex ones tap into the full power of LLM-scale networks. The result is that latency limits are still met without sacrificing depth of understanding where it is needed.

How It Works

The system replaces static inference with an adaptive pipeline. A lightweight classifier first routes each request based on its complexity. The chosen model then runs on hardware matched to its size. The aim is for every person to receive a high-quality experience and for every ad dollar to work harder.
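The two-step pipeline described here (classify, then dispatch) can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration: the `AdRequest` fields, the hand-weighted `complexity_score` standing in for the lightweight classifier, the 0.5 threshold, and the two placeholder model variants. It is not Meta's implementation, only one simple way such routing could be structured.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class AdRequest:
    """Hypothetical per-request context signals (field names are illustrative)."""
    recent_actions: int    # number of recent user actions
    session_length: int    # events in the current session
    intent_entropy: float  # 0.0 = clear intent, 1.0 = highly ambiguous


def complexity_score(req: AdRequest) -> float:
    """Toy stand-in for the lightweight classifier; returns a score in [0, 1]."""
    activity = min(req.recent_actions / 50.0, 1.0)
    session = min(req.session_length / 100.0, 1.0)
    entropy = max(0.0, min(req.intent_entropy, 1.0))
    # Assumed weights; a real router would be a trained model.
    return 0.4 * activity + 0.2 * session + 0.4 * entropy


def route(req: AdRequest, threshold: float = 0.5) -> str:
    """Pick a model variant name from the complexity score."""
    return "heavy" if complexity_score(req) >= threshold else "light"


# Placeholder model variants; each would wrap a real ranking model.
MODELS: Dict[str, Callable[[AdRequest], str]] = {
    "light": lambda req: "ranked by light model",
    "heavy": lambda req: "ranked by heavy model",
}


def rank(req: AdRequest, threshold: float = 0.5) -> str:
    """Route the request, then run the chosen variant."""
    return MODELS[route(req, threshold)](req)
```

Under these assumed weights, a request with little activity and clear intent stays on the light path, while a long, ambiguous session is sent to the heavy variant. Moving the threshold changes how much traffic reaches the larger model.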

Three Key Innovations Powering the Shift

To make this vision practical at Meta's scale, the team re-engineered the entire inference stack from the ground up. Three innovations stand out:

1. Inference-Efficient Model Scaling

By adopting a request-centric architecture, the Adaptive Ranking Model can serve LLM-scale models in under a second. This enables a richer understanding of user interests and intent without degrading the real-time experience. The architecture prioritizes the most important computations for each request, avoiding wasteful operations.
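The text does not spell out what "request-centric" means in detail. One plausible reading, assumed here purely for illustration, is that work depending only on the user is done once per request and then shared across all candidate ads, rather than being repeated per candidate. The sketch below contrasts the two approaches with a hypothetical `CountingEncoder` that records how often the expensive user-side step runs.

```python
from typing import List, Sequence


class CountingEncoder:
    """Hypothetical expensive user encoder that counts its invocations."""

    def __init__(self) -> None:
        self.calls = 0

    def __call__(self, user_features: Sequence[float]) -> List[float]:
        self.calls += 1
        # Stand-in for a large user-side network.
        return [0.5 * x for x in user_features]


def dot(a: Sequence[float], b: Sequence[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def score_per_candidate(encoder, user_features, ads) -> List[float]:
    """Baseline: the user is re-encoded for every candidate ad."""
    return [dot(encoder(user_features), ad) for ad in ads]


def score_per_request(encoder, user_features, ads) -> List[float]:
    """Request-centric: encode the user once, reuse it for all candidates."""
    user_vec = encoder(user_features)
    return [dot(user_vec, ad) for ad in ads]
```

Both functions return identical scores. The difference is that the first runs the encoder once per candidate and the second runs it once per request, so the saving grows with the number of candidates.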

2. Model/System Co-Design

Meta developed hardware-aware model architectures that align neural network design with the capabilities of underlying silicon—GPUs, custom accelerators, and memory hierarchies. This co-design maximizes hardware utilization across heterogeneous environments, reducing wasted cycles and energy.

3. Reimagined Serving Infrastructure

Leveraging multi-card and multi-GPU setups, the new serving infrastructure supports models with up to one trillion parameters. Hardware-specific optimizations—such as fused kernels and efficient data movement—allow these massive networks to run with unprecedented efficiency, making LLM-scale ad ranking a reality.
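A rough calculation shows why a model of this size cannot sit on a single device. The sketch below estimates the minimum number of accelerators needed just to hold the weights. The 2 bytes per parameter (a 16-bit format), the 80 GB of device memory, and the 80% usable fraction are all assumptions chosen for illustration and do not come from the source. Activations, caches, and replication for throughput are ignored.

```python
import math


def min_devices(
    num_params: int,
    bytes_per_param: int,
    device_mem_gb: float,
    usable_fraction: float = 0.8,
) -> int:
    """Lower bound on devices needed to store the weights alone.

    All inputs are illustrative assumptions, not published figures.
    """
    weight_bytes = num_params * bytes_per_param
    usable_bytes = device_mem_gb * 1e9 * usable_fraction
    return math.ceil(weight_bytes / usable_bytes)


# One trillion parameters at an assumed 2 bytes each is 2 TB of weights.
ONE_TRILLION = 10**12
devices_needed = min_devices(ONE_TRILLION, bytes_per_param=2, device_mem_gb=80)
```

With these assumed numbers the weights alone span dozens of devices, which is why sharding a model across multiple cards is a prerequisite rather than an optimization.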

Proven Results in Production

The Adaptive Ranking Model launched on Instagram in the fourth quarter of 2025. Early results confirm its impact: a 3% increase in ad conversions and a 5% increase in ad click-through rate for targeted users. These gains come without increasing overall system compute costs, thanks to the adaptive routing. Advertisers see higher returns, while users continue to enjoy fast, relevant suggestions.
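To see how routing can keep total compute flat, consider a simple cost model: every request pays for the router, and then for either the light or the heavy variant. The sketch below computes the average cost per request and the largest share of traffic that can use the heavy model within a fixed budget. The cost figures are hypothetical units invented for illustration. They are not measurements from Meta's system.

```python
def average_cost(
    p_heavy: float, cost_light: float, cost_heavy: float, cost_router: float
) -> float:
    """Expected compute cost per request when a fraction p_heavy goes heavy."""
    return cost_router + p_heavy * cost_heavy + (1.0 - p_heavy) * cost_light


def max_heavy_fraction(
    budget: float, cost_light: float, cost_heavy: float, cost_router: float
) -> float:
    """Largest heavy-traffic fraction whose average cost stays within budget.

    Solves budget = router + p * heavy + (1 - p) * light for p, clamped to [0, 1].
    """
    if cost_heavy <= cost_light:
        raise ValueError("heavy model is assumed to cost more than the light one")
    p = (budget - cost_router - cost_light) / (cost_heavy - cost_light)
    return max(0.0, min(1.0, p))


# Hypothetical units: the old single model costs 1.0 per request.
p = max_heavy_fraction(budget=1.0, cost_light=0.4, cost_heavy=3.0, cost_router=0.02)
```

In this made-up scenario, a heavy model three times as expensive as the old baseline can still serve a bit over a fifth of requests at the same average cost, because the remaining requests run on a cheaper light model.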

Conclusion: The Future of Real-Time Ads

Meta's Adaptive Ranking Model represents a paradigm shift in serving large-scale AI for real-time systems. By bending the inference scaling curve, it resolves the trilemma of complexity, latency, and cost. As the platform continues to integrate LLM-level intelligence, both users and businesses can expect ever more personalized and efficient advertising experiences.